Mend AI Red Teaming

Note:

AI Red Teaming is available only with Mend AI Premium.

The use of the service indicated under this page is subject to the terms and conditions set forth under our AI Supplemental Terms-of-Service.

Overview

Red Teaming is essentially prompt-based adversarial testing. It’s a practice where attacks on an organization's AI systems are simulated, to identify weaknesses.

Mend AI Red Teaming provides a single-pane-of-glass experience with dynamic AI Insights within the Mend AppSec Platform, enabling users to conduct red teaming and dynamic security tests specifically tailored for AI applications, enhancing the ability to identify, analyze, and mitigate vulnerabilities in AI systems.

It is designed to assist organizations in meeting the rigorous testing and vulnerability management standards set forth by the EU AI Act, CRA, and other global AI security frameworks.

Prerequisites

Note: The use of the service indicated under this page is subject to the terms and conditions set forth under our AI Supplemental Terms-of-Service.

Mend AI Red Teaming requires a Mend AI Premium subscription.

The following table lists the red teaming actions that users can perform, depending on their assigned roles in the Mend AppSec Platform:

Role | View Targets/Scans | Run Scan | Manage Targets | Delete Targets |

|---|---|---|---|---|

Admin | ✔️ | ✔️ | ✔️ | ✔️ |

Security Analyst | ✔️ | ✔️ | ❌ | ❌ |

Scan Manager | ✔️ | ✔️ | ✔️ | ❌ |

Member | ✔️ | ❌ | ❌ | ❌ |

Legal Analyst | ✔️ | ❌ | ❌ | ❌ |

Auditor | ✔️ | ❌ | ❌ | ❌ |

Note: Users can view their own targets and scans, in any context (Org/Application/Project).

Getting Started

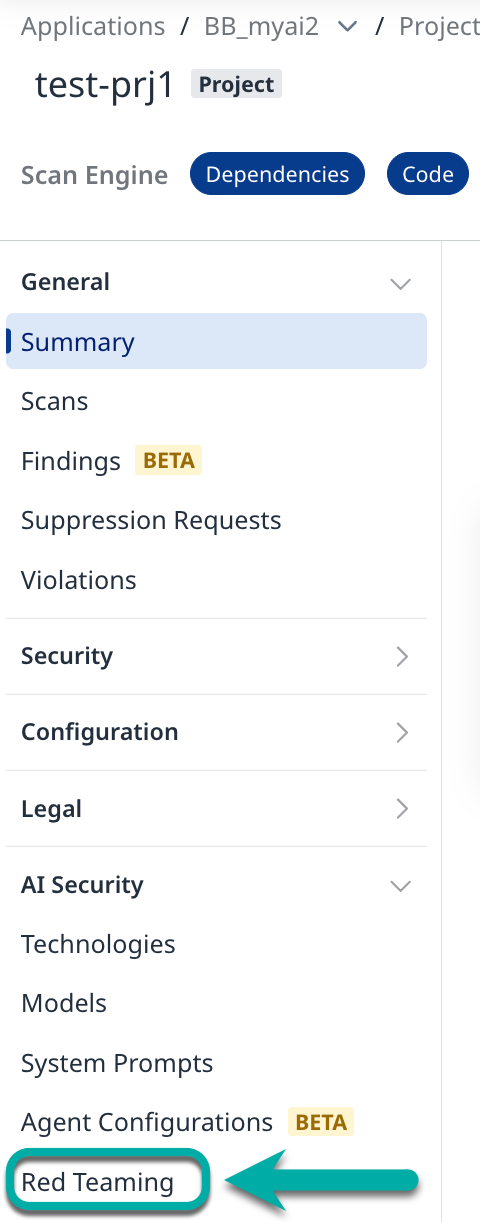

To set up or configure red teaming, navigate to your project in the Mend AppSec Platform and from the left-pane menu select Red Teaming.

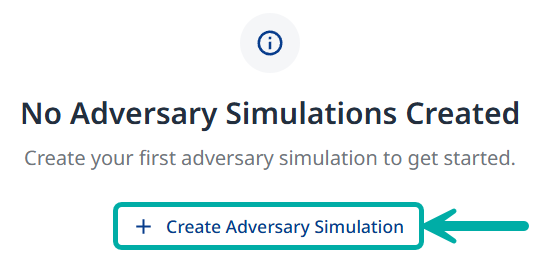

If you already have a target set up in the selected project, you will have the option to create a new one by clicking the + New Adversary Simulation button on the right.

If you don’t have a target set up in the selected project, click the + Create Adversary Simulation at the center of the screen.

Visit the Create Adversary Simulation page to proceed.